The Mythos Moment is Real. The Fix-It-Faster Response isn’t.

For two decades, AppSec has been built around a familiar loop: find vulnerabilities, triage them, patch them, verify the fix, repeat. That workflow still matters. What the recent Mythos results make clear is that it can no longer carry the weight of being the primary line of defense.

The point is not just that Mythos is unusually capable. It is that recent public evaluations mark a real change in the pace and quality of AI-assisted vulnerability research.

What Mythos Actually Demonstrated

Anthropic says Mythos identified and exploited zero-day vulnerabilities across every major operating system and web browser, including a now-patched 27-year-old OpenBSD bug. In its internal testing, Mythos reached full control-flow hijack on 10 fully patched open-source targets and substantially outperformed earlier Claude models on a Firefox exploit benchmark, producing 181 working exploits where Opus 4.6 produced two.

Independent testing points in the same direction. The UK AI Security Institute reports a 73 percent success rate on expert-level capture-the-flag tasks and says Mythos was the first model it has tested to complete its 32-step enterprise attack range end to end, succeeding in 3 of 10 runs.

None of this proves every security team is about to drown in exploitable findings. What it does mean is that remediating faster, on its own, is no longer a complete answer.

What Anthropic’s Disclosure Pace Reveals

A second signal deserves equal attention. Anthropic pairs its “faster and cheaper” claims with a constraint: it will not simply dump everything it can find on maintainers at once. That is a reasonable stance for bug bounties and coordinated disclosure. It is also a tell. It assumes a world where high-quality discovery can be scaled up on demand, and the limiting factor is how much human remediation and validation capacity sits on the other side. Internal vulnerability management programs live in the same world. They may not see a sudden wall of Mythos-sourced tickets, but they do have to plan for a future where exploit discovery is cheap, repeatable, and persistent, and where “we will stay ahead of the queue” is no longer a believable plan on its own.

A New Question for Security Programs

The strategic question changes accordingly. It stops being “How fast can we patch this?” and starts being “If this gets exploited before we patch it, what can the attacker actually reach or do?”

That question points directly at containment. Network segmentation is worth its cost if a compromised service cannot laterally reach more sensitive systems. Egress controls are worth theirs if they make data theft harder and noisier. Identity scoping, ephemeral credentials, runtime controls, and tighter production-data access all pull their weight in a world where exploitation is easier to automate.

None of those ideas are new. What is changing is their relative importance. In a world where capable systems can identify and validate serious flaws faster, architectural controls stop looking like backup defenses and start looking like the thing that keeps a flaw from becoming a material incident.

Vulnerability Management, Redefined

Vulnerability management is not irrelevant in this model. It is redefined by it.

The hard question is no longer which findings to patch first. It is which findings are already meaningfully constrained by the architecture and which ones are not. That cannot be answered with a CVSS score and an asset list. It requires context: data sensitivity, identity boundaries, reachable network paths, trust relationships, and whether the controls people think exist are actually working.
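To make that concrete, here is a minimal sketch of containment-aware ordering. Everything in it is an assumption for illustration: the `Finding` fields, the `breaks_containment` rule, and the sample data are hypothetical, not the output of any particular scanner or platform. The one deliberate choice is that a control nobody has verified is treated as a control that does not exist.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    # All fields are hypothetical context attributes, not a real tool's schema.
    id: str
    cvss: float
    internet_reachable: bool      # is there a network path from an untrusted zone?
    reaches_sensitive_data: bool  # can the affected workload reach sensitive data?
    controls_verified: bool       # have the assumed controls actually been tested?


def breaks_containment(f: Finding) -> bool:
    """Assumed rule: unverified controls count as absent."""
    if not f.controls_verified:
        return True
    return f.internet_reachable and f.reaches_sensitive_data


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Findings that break containment come first; CVSS only breaks ties."""
    return sorted(findings, key=lambda f: (not breaks_containment(f), -f.cvss))


findings = [
    Finding("F1", 9.8, internet_reachable=False, reaches_sensitive_data=False, controls_verified=True),
    Finding("F2", 6.5, internet_reachable=True, reaches_sensitive_data=True, controls_verified=True),
    Finding("F3", 7.0, internet_reachable=False, reaches_sensitive_data=False, controls_verified=False),
]

for f in prioritize(findings):
    print(f.id, f.cvss, "breaks containment" if breaks_containment(f) else "contained")
```

In this toy data the 9.8 lands last, because the architecture demonstrably contains it, while the 7.0 sitting behind untested controls lands first.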

Exposure Management as the Instrument Panel

This is where exposure management becomes more important, not less, and where its role shifts. A platform that can correlate findings with asset criticality, environment topology, control coverage, and real attack paths stops being a way to move tickets faster. It becomes the instrument panel for a compromise-tolerant architecture: the thing that tells you which of your existing defenses are load-bearing, which are theatrical, and which of your findings actually break containment despite them. That is a harder problem than backlog management, and a more strategic one.

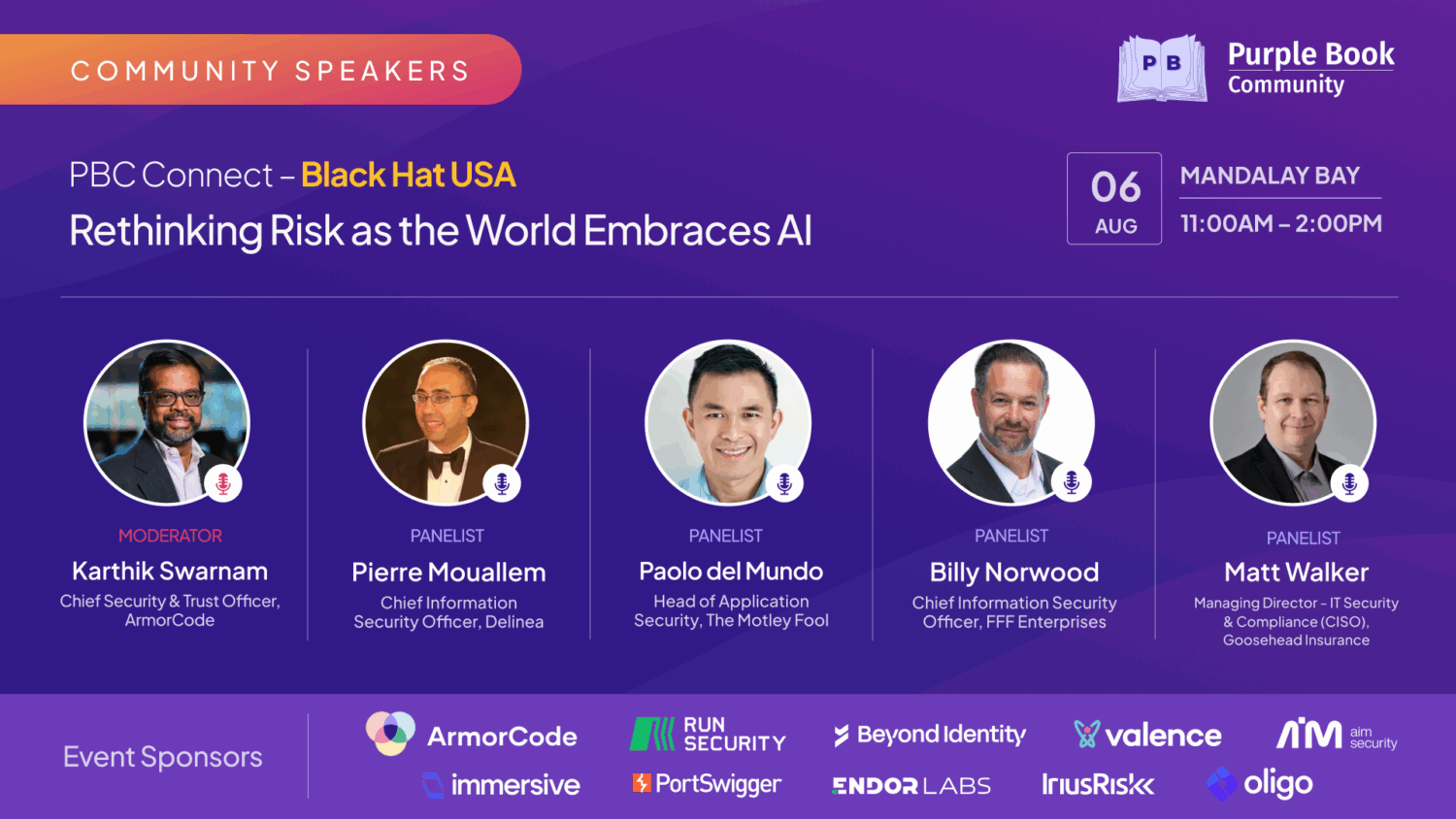

What This Looks Like in Practice at The Motley Fool

At The Motley Fool, that instrument panel is ArmorCode. The question it answers isn’t which finding scores highest. It’s which findings are actually dangerous given our environment, our asset tiers, and what’s actively being exploited in the wild. That’s the conversation that matters, and it’s a different one than moving tickets faster.

Three Implications for Security Programs

Three practical implications follow.

Rethink What MTTR Is Measuring

MTTR stays on the board, but it should not remain the headline metric in every context. Faster remediation is good. But a program that only gets faster at remediating issues its architecture already contains is running the wrong race at a faster pace.
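One way to see the difference is to report MTTR separately for contained and uncontained findings instead of as a single number. The sketch below assumes each remediation record carries a hypothetical flag saying whether the architecture already contained the issue. The numbers are made up for illustration.

```python
from statistics import mean

# Hypothetical records: (days_to_remediate, contained_by_architecture)
records = [(3, True), (5, True), (4, True), (30, False), (20, False)]


def mttr_by_containment(records):
    """Return mean days to remediate overall and per containment group."""
    contained = [days for days, is_contained in records if is_contained]
    uncontained = [days for days, is_contained in records if not is_contained]
    return {
        "overall": mean(days for days, _ in records) if records else None,
        "contained": mean(contained) if contained else None,
        "uncontained": mean(uncontained) if uncontained else None,
    }


print(mttr_by_containment(records))
```

With these sample numbers the blended figure hides the fact that the findings that matter most are the slowest to close.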

Test Architecture Like You Test Code

Architectural controls need to be tested with the same seriousness as code. Segmentation, egress filtering, and identity boundaries often look stronger in diagrams than they do under pressure. Red-team the architecture, not just the application.
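A lightweight version of this can run like a unit test: state which paths must never exist, then check them against the connections that are actually allowed. The sketch below is a simplified model, not a substitute for a real red-team exercise. The service names, the `allowed` map, and the `forbidden` pairs are all hypothetical, and in practice the map would have to come from real firewall rules or observed flows rather than from the diagram.

```python
from collections import deque


def reachable(src, allowed):
    """Return every node reachable from src by following allowed connections."""
    seen = {src}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        for nxt in allowed.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(src)
    return seen


def segmentation_violations(allowed, forbidden):
    """Return the forbidden (source, target) pairs that are actually reachable."""
    return [(s, t) for s, t in forbidden if t in reachable(s, allowed)]


# Hypothetical environment: service -> services it may initiate connections to.
allowed = {
    "web": ["api"],
    "api": ["orders-db", "reporting"],
    "reporting": ["billing-db"],
}

# Paths the architecture diagram claims do not exist.
forbidden = [("web", "billing-db"), ("reporting", "web")]

print(segmentation_violations(allowed, forbidden))
```

Here no single rule lets `web` talk to `billing-db`, yet the path exists transitively through `api` and `reporting`. That is the kind of gap a diagram tends to hide.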

Connect Findings to Real Exposure

Environment context becomes decisive. Programs that can connect findings to real business and technical exposure will make better decisions than programs still prioritizing on scanner output plus severity labels. If you are treating an exposure graph only as a triage accelerator, you are underutilizing it.

Why ArmorCode Anchors This For Us

ArmorCode helps us connect findings to real business and technical exposure rather than scanner output and severity labels. The question it answers is not which finding is technically the worst. It is which finding actually matters in our environment, given our architecture, our data, and our controls.

Where This Leaves Us

Mythos is not the end of patch-centric security programs. It is the end of patching faster, by itself, being a complete answer. The programs that matter five years from now will be the ones that treat containment as a primary control and remediation as one part of a broader system. The ones still optimizing the funnel as their primary defense will be the case studies.

If you’re wrestling with these questions in your own program, I’d love to compare notes. I write about AI, AppSec, and MCP-driven security tooling on my blog at mcpandchill.com, and you can find me on LinkedIn as Paolo del Mundo.